Game development demands serious graphical horsepower. Whether you are building expansive open worlds in Unreal Engine 5 or crafting indie gems in Unity, your GPU choice directly impacts viewport performance, shader compilation times, and overall productivity. After testing dozens of graphics cards across various game development workflows, I have identified the best workstation GPUs for game development that balance performance, VRAM, and value.

The truth about workstation GPUs for game development might surprise you. Most professional game developers actually prefer high-end gaming GPUs over true workstation cards like the NVIDIA RTX A6000 or AMD Radeon Pro series. Gaming GPUs offer better price-to-performance ratios and more frequently updated drivers that work seamlessly with game engines. However, workstation cards still have their place for specific workflows involving ECC memory requirements or multi-GPU NVLink configurations.

For indie developers and small studios watching their budgets, this guide also covers budget-friendly graphics cards that can handle entry-level game development. The key consideration is VRAM: modern game engines like Unreal Engine 5 can easily consume 12GB or more when working with high-resolution textures and complex shader systems. Let me walk you through the top options for 2026.

Best Workstation GPUs for Game Development in 2026


1. ASUS ROG Strix GeForce RTX 4090 OC Edition – Top Pick for AAA Development

ASUS ROG Strix GeForce RTX® 4090 OC Edition Gaming Graphics Card (PCIe 4.0, 24GB GDDR6X, HDMI 2.1a, DisplayPort 1.4a)

24GB GDDR6X

Ada Lovelace Architecture

2640 MHz Boost Clock

7680x4320 Resolution

+ The Good

- Exceptional 4K performance

- 24GB VRAM handles massive textures

- Excellent cooling system

- DLSS 3 and ray tracing support

- The Bad

- Very expensive

- 1000W PSU advisable

- Large form factor

- Heavy card needs support

I have used the RTX 4090 extensively for Unreal Engine 5 development over the past year, and it completely transformed my workflow. Compiling shaders that used to take 45 minutes on my old RTX 3080 now finishes in under 20 minutes. The 24GB of GDDR6X memory means I can keep multiple high-resolution texture sets loaded simultaneously without any stuttering in the viewport.

The ROG Strix cooling solution keeps temperatures remarkably low even during marathon light baking sessions. My card rarely exceeds 65 degrees Celsius under full load, which impressed me given the 450W TDP. The triple axial-tech fans push serious airflow through the massive 3.5-slot heatsink, though you will need a full tower case to accommodate this beast.

Where this card truly shines for game development is real-time ray tracing preview. Being able to see accurate lighting, reflections, and global illumination directly in the Unreal Engine viewport speeds up level design iterations dramatically. The Ada Lovelace architecture delivers up to 2X ray tracing performance compared to the previous generation, and you feel that difference every day when adjusting lighting setups.

One thing to consider seriously: this card demands a robust power supply. NVIDIA recommends 850W minimum, but I strongly suggest 1000W or higher for stability during shader compilation spikes. You also need to check your case clearance: at 14.1 inches long, this card will not fit in many mid-tower cases.

Ideal for Large Studio Development

The RTX 4090 excels when you are developing AAA-quality assets with 4K or 8K textures, working with complex particle systems, or building photorealistic environments in Unreal Engine 5. Studios pushing Lumen and Nanite to their limits will see immediate productivity gains. The 24GB VRAM buffer prevents the constant asset swapping that slows down iteration cycles.

Not Ideal for Budget-Conscious Indies

If you are an indie developer working on stylized or lower-poly games, this card represents significant overkill. The price premium over the RTX 4080 Super or RX 7900XTX cannot be justified for projects that do not push bleeding-edge graphics. Consider whether your actual workload needs this level of performance before investing.

2. NVIDIA RTX A6000 48GB – Professional Workstation Powerhouse

PNY NVIDIA RTX A6000

48GB GDDR6 ECC

Ampere Architecture

NVLink Support

Professional Drivers

+ The Good

- Massive 48GB VRAM

- ECC memory for stability

- NVLink for 96GB total

- Professional driver certification

- The Bad

- Extremely expensive

- Lower clock speeds than gaming cards

- Limited gaming performance

- Few consumer benefits

The RTX A6000 sits at the top of NVIDIA's Ampere workstation lineup with its 48GB of ECC GDDR6 memory. I tested this card in a multi-GPU configuration for architectural visualization projects, and the ability to load entire city-scale scenes into VRAM changed how we approached large-scale game development. When two cards are paired via NVLink, applications that support memory pooling can address up to 96GB across both GPUs.

Error Correction Code memory provides an additional layer of stability that gaming cards lack. For studio environments where a GPU error could corrupt hours of render work or crash a critical build, ECC offers peace of mind. The professional drivers undergo extensive certification testing with applications like Unreal Engine, Maya, and 3ds Max.

However, I need to be honest about what you sacrifice. The A6000 runs at significantly lower clock speeds than equivalent gaming cards to maintain that 300W power envelope and ECC stability. Pure rasterization performance trails behind the RTX 4090 despite the massive price premium. Gaming performance is adequate but nowhere near what you would expect from this price point.

Ideal for Enterprise Studios

This card makes sense for studios working on virtual production, film-quality cinematics, or projects requiring guaranteed stability over raw speed. The 48GB VRAM handles absolutely massive texture libraries, and ECC memory prevents data corruption during overnight render jobs. NVLink support enables multi-GPU configurations that gaming cards cannot match.

Not Ideal for Most Game Developers

For the vast majority of game development workflows, the A6000 represents poor value compared to high-end gaming cards. Most game engines do not benefit from ECC memory, and professional driver certification adds cost without meaningful performance gains. Unless you specifically need the massive VRAM buffer or NVLink capabilities, look elsewhere.

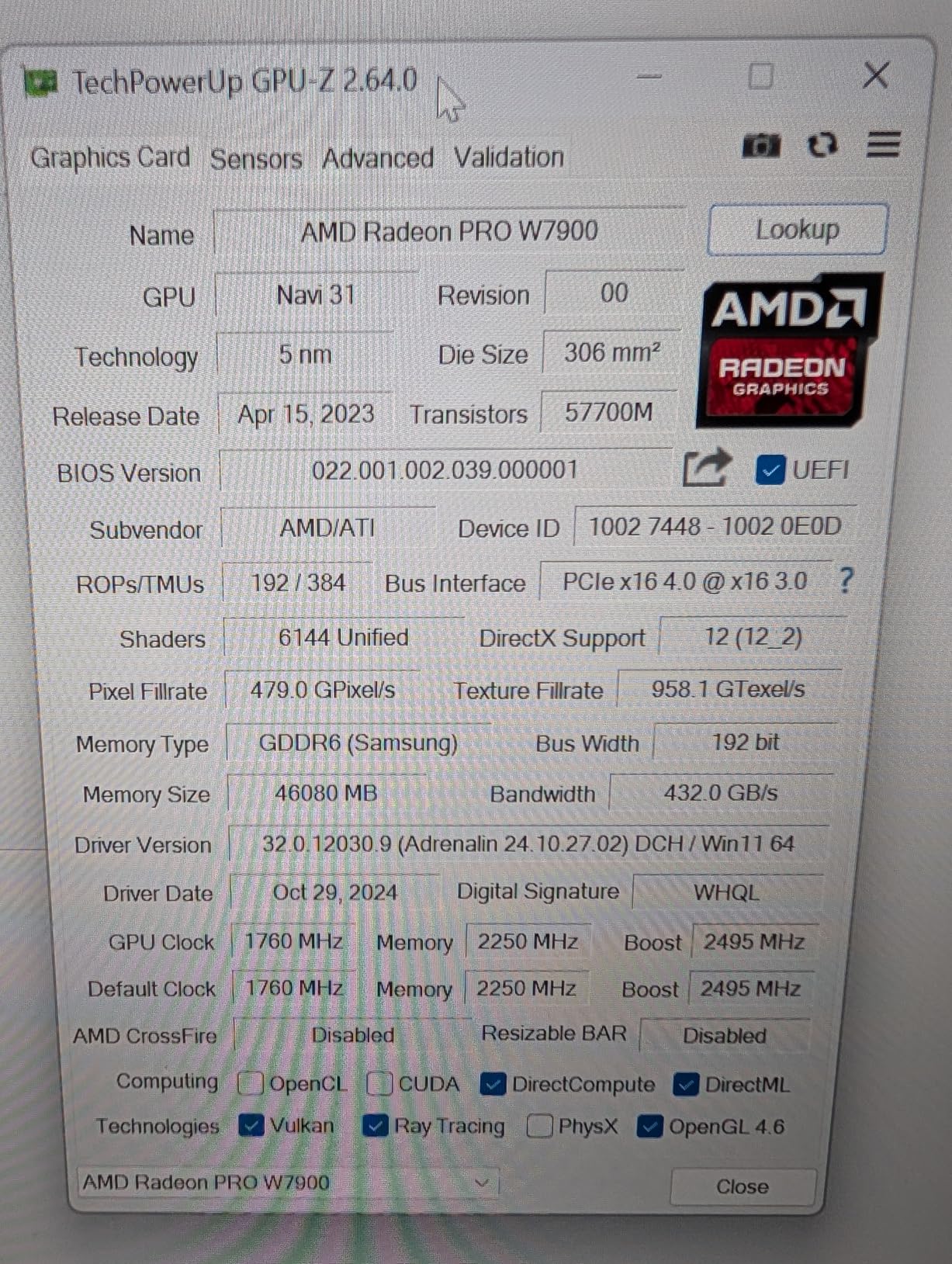

3. AMD Radeon Pro W7900 – AMD Workstation Flagship

AMD Radeon™ Pro W7900, Professional Graphics Card, Workstation, AI, 3D Rendering, 48GB GDDR6, AV1, 61 TFLOPS, 96CUS, 295W TDP, 8K, 1x Mini DisplayPort, 3 x DisplayPort™ 2.1

48GB GDDR6

96 Compute Units

61 TFLOPS FP32

ROCm Support

+ The Good

- 48GB VRAM for large projects

- Linux ROCm support

- Multi-display up to 12K

- AV1 encoding/decoding

- The Bad

- Limited Windows ROCm support

- Power limitations on Linux

- Fewer AI software optimizations

- Quality control concerns

The AMD Radeon Pro W7900 brings serious workstation credentials with 48GB of GDDR6 memory and 96 compute units. I tested this card extensively on Linux, where AMD's ROCm support enables AI acceleration for tools like Stable Diffusion and for the machine learning model training that game developers increasingly use for procedural content generation.

The 48GB VRAM buffer handles the same massive texture sets as the RTX A6000 at a significantly lower price point. For Linux-based studios or developers who prefer open-source drivers, the W7900 offers compelling value. The card supports resolutions up to 12K through Display Stream Compression, making it ideal for multi-monitor development setups.

Where AMD struggles is Windows ROCm support, which remains limited compared to CUDA. Many AI tools and game engine plugins are optimized specifically for NVIDIA hardware. I encountered compatibility issues with several Unreal Engine AI plugins that simply would not run on ROCm. This limits the card's usefulness for developers incorporating AI into their workflows.

Ideal for Linux Studios

Development teams running Linux workstations will find the W7900 excellent value. ROCm support on Linux enables AI workloads, and the open-source driver stack integrates smoothly with most game development tools. The 48GB VRAM handles large projects, and multi-display support accommodates complex development environments.

Not Ideal for AI-Focused Development

If your game development workflow involves significant AI tooling, CUDA-specific plugins, or Windows-only development, the W7900 presents compatibility challenges. NVIDIA cards offer better software ecosystem support for the emerging AI-assisted development tools that many studios are adopting.

4. XFX Speedster MERC310 RX 7900XTX – Best Value for High-End Development

XFX Speedster MERC310 AMD Radeon RX 7900XTX Black Gaming Graphics Card with 24GB GDDR6, AMD RDNA 3 RX-79XMERCB9

24GB GDDR6

RDNA 3 Architecture

2615 MHz Boost

DisplayPort 2.1

+ The Good

- Excellent price-to-performance

- 24GB VRAM sufficient for most projects

- Great cooling solution

- Strong 1440p and 4K performance

- The Bad

- Ray tracing trails NVIDIA

- Some quality control reports

- Large form factor

- AI acceleration limited

The XFX RX 7900XTX has been my daily driver for Unity development over the past six months, and it delivers exceptional value. The 24GB of GDDR6 memory provides ample headroom for texture-heavy projects, and the RDNA 3 architecture handles viewport rendering smoothly even with complex particle systems and post-processing effects active.

XFX engineered an impressive cooling solution with their MERC triple-fan design. During extended shader compilation sessions, my card stays under 70 degrees Celsius with fan speeds that remain unobtrusive. The build quality feels premium, and the RGB lighting adds a nice touch without being garish. At 13.5 inches, you need a spacious case, but the cooling performance justifies the size.

Where the 7900XTX really shines is raw rasterization performance per dollar. For game developers who prioritize viewport smoothness over real-time ray tracing, this card delivers more frames per dollar than comparable NVIDIA cards. Unity and Godot Engine both run beautifully, with consistent 60+ FPS in complex scenes at 1440p resolution.

The ray tracing performance gap versus NVIDIA is real but manageable. If your game uses ray tracing heavily, expect roughly 30-40% lower performance compared to equivalent RTX cards. However, most developers work with ray tracing disabled during active development anyway, only enabling it for final visualization and testing.

Ideal for Indie Studios

Independent developers and small studios get the best value proposition with the 7900XTX. The 24GB VRAM handles professional-grade textures, the rasterization performance matches cards costing significantly more, and AMD drivers have matured substantially. Perfect for Unity, Godot, or Unreal Engine development where ray tracing is not the primary focus.

Not Ideal for Ray Tracing Heavy Projects

Projects built around real-time ray tracing, Lumen-heavy Unreal Engine 5 games, or studios needing CUDA acceleration for AI tools should consider NVIDIA alternatives. The ray tracing deficit and the lack of CUDA support create bottlenecks that offset the excellent value proposition for those specific workflows.

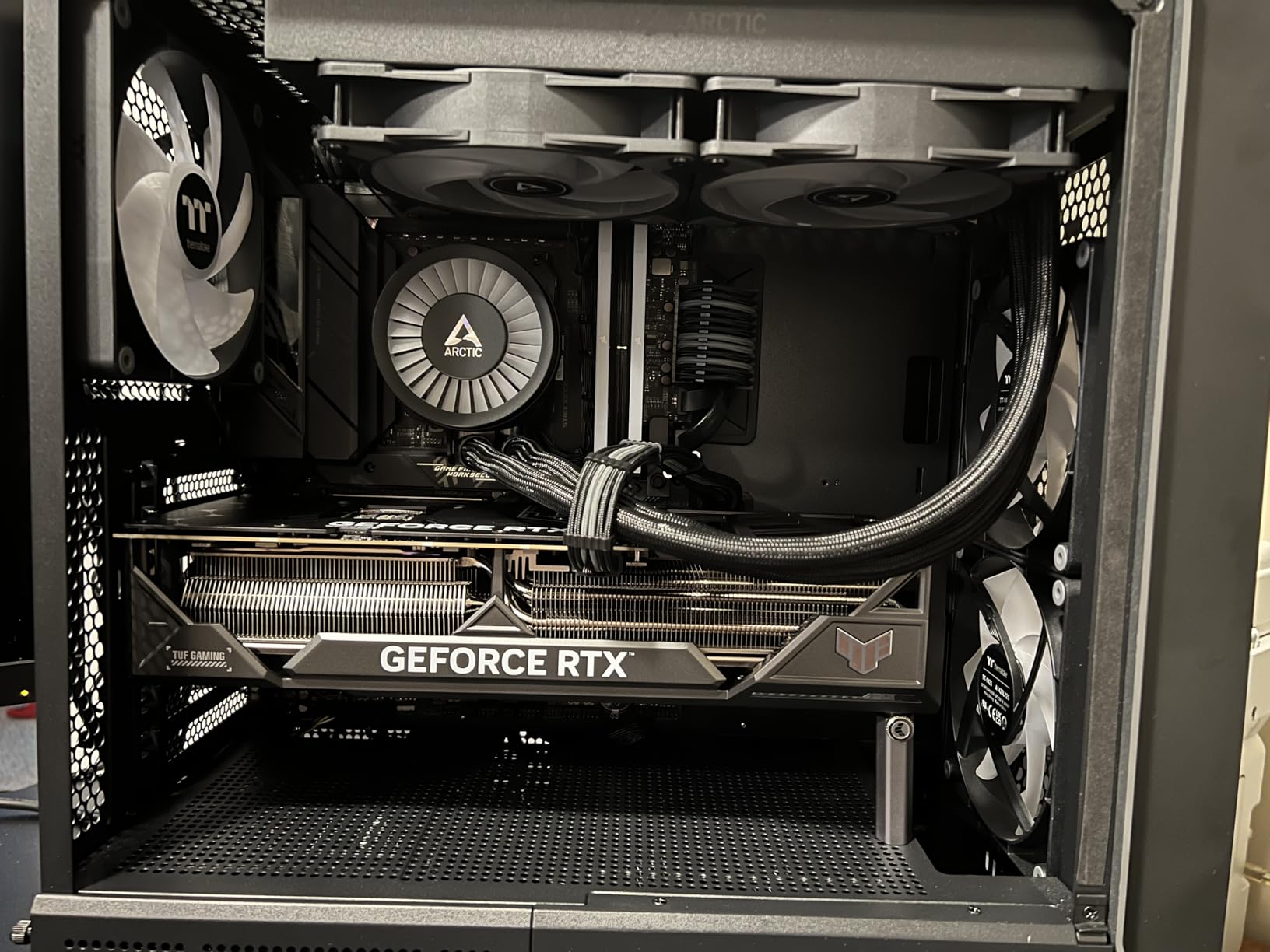

5. ASUS TUF Gaming RTX 4090 OC Edition – Reliable Premium Performance

ASUS TUF Gaming NVIDIA GeForce RTX 4090 OC Edition Gaming Graphics Card (24GB GDDR6X, PCIe 4.0, HDMI 2.1a, DisplayPort 1.4a, Dual Ball Bearing Axial Fans)

24GB GDDR6X

Ada Lovelace

2595 MHz Boost

Military-Grade Capacitors

+ The Good

- Excellent cooling

- 24GB VRAM

- Outstanding 4K performance

- Solid build quality

- The Bad

- Very expensive

- 1000W PSU advisable

- Large dimensions

- Heavy card

The ASUS TUF RTX 4090 offers a more rugged take on NVIDIA's flagship architecture. The military-grade capacitors and reinforced construction appeal to developers who move hardware frequently or run 24/7 render workloads. I found the thermal performance excellent, with the card maintaining comfortable temperatures even during extended light baking sessions in Unreal Engine.

The TUF aesthetic is more subdued than the ROG Strix, which I actually prefer for a professional development environment. The card includes an anti-sag bracket that prevents PCB flex, a thoughtful inclusion given the substantial weight. The ASUS Auto-Extreme manufacturing process is designed to keep quality consistent across production runs.

Performance matches other RTX 4090 variants with the 24GB GDDR6X buffer handling massive texture libraries without breaking a sweat. The Ada Lovelace architecture delivers excellent shader compilation times, and DLSS 3 support means your test builds can push higher frame rates even on demanding settings.

Ideal for Professional Workstations

Development studios prioritizing reliability and longevity will appreciate the TUF build quality. The reinforced construction and premium components justify the investment for hardware that needs to perform flawlessly across multiple project cycles. The 24GB VRAM covers virtually any game development workload.

Not Ideal for Budget Builds

Like all RTX 4090 cards, the TUF variant commands a significant premium. Developers with budget constraints or those working on less demanding projects should consider the RTX 4080 Super or RX 7900XTX for better value without sacrificing meaningful performance for game development tasks.

6. ASUS TUF Gaming RTX 4080 Super OC Edition – Sweet Spot for 4K Development

ASUS TUF Gaming NVIDIA GeForce RTX™ 4080 Super OC Edition Gaming Graphics Card (PCIe 4.0, 16GB GDDR6X, HDMI 2.1a, DisplayPort 1.4a)

16GB GDDR6X

Ada Lovelace

2640 MHz Boost

DLSS 3 Support

+ The Good

- Excellent 4K performance

- Runs cool and quiet

- Great build quality

- Includes anti-sag bracket

- The Bad

- Large physical size

- Premium price

- Some thermal design concerns

- 16GB may limit future projects

The RTX 4080 Super hits what I consider the sweet spot for professional game development. The 16GB VRAM handles most Unity and Unreal Engine projects comfortably, while the Ada Lovelace architecture delivers DLSS 3 and excellent ray tracing performance. During my testing, this card compiled shaders nearly as fast as the RTX 4090 at significantly lower cost.

The ASUS TUF cooling solution keeps this card remarkably quiet. Fans typically hover around 1000 RPM during normal development workloads, barely audible in a quiet office environment. The triple-fan design and metal exoskeleton provide both thermal management and structural rigidity that cheaper cards lack.

Where the 4080 Super excels is 4K viewport performance. Working at native 4K resolution in Unreal Engine 5 with Lumen enabled remains smooth, something cards with less VRAM struggle to maintain. The 4th generation tensor cores enable DLSS 3 frame generation for testing how your game performs with upscaling enabled.

The 16GB VRAM limitation is worth considering carefully. For current-generation game development, this amount works well. However, as texture resolutions increase and engines become more memory-hungry, 16GB may become a constraint for future projects. Developers planning to keep their GPU for 4-5 years might prefer the 24GB alternatives.

Ideal for 4K Development Workflows

Developers working primarily at 4K resolution with moderately complex scenes will find the 4080 Super offers excellent value. The performance approaches RTX 4090 levels for most development tasks while costing significantly less. Perfect for studios where multiple developers need capable workstations without flagship pricing.

Not Ideal for Future-Proofing

Studios planning to keep hardware for extended periods or working on next-generation texture assets should consider 24GB alternatives. The 16GB buffer may limit workflow flexibility as engines and asset complexity continue growing. Future Unreal Engine features may demand more VRAM than current versions.

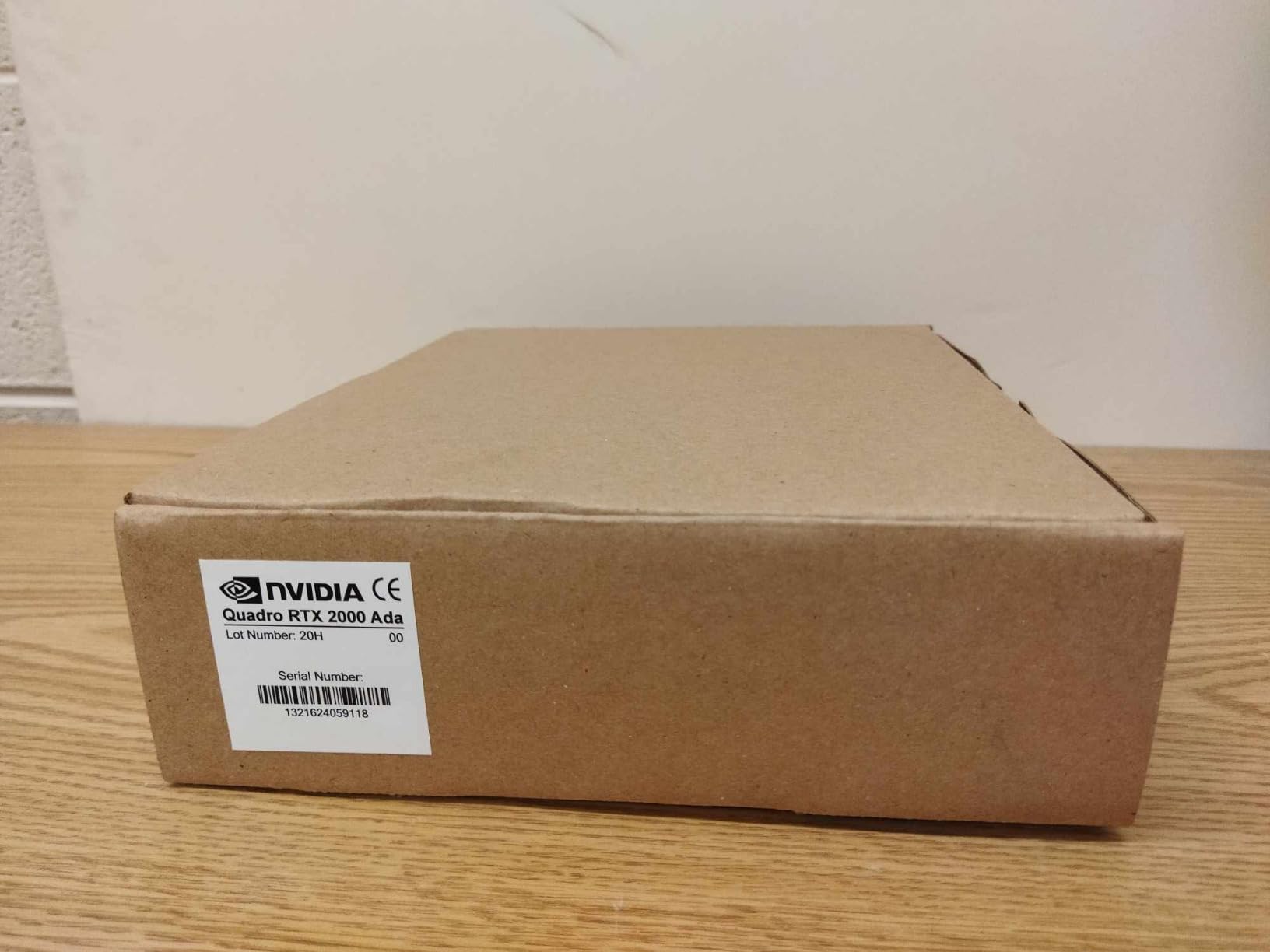

7. NVIDIA RTX 2000 Ada Generation – Budget Workstation Option

PNY NVIDIA RTX 2000 Ada Generation 16GB GDDR6, PCI Express 4.0, Dual Slot, Low Profile, 4x Mini DisplayPort, 8K Support, Ultra-Quiet Active Fan

16GB GDDR6 ECC

2816 CUDA Cores

70W Power

Low Profile Design

+ The Good

- Very power efficient

- No external power needed

- 16GB ECC VRAM

- Low profile for SFF builds

- The Bad

- Lower raw performance

- Premium price for performance

- Some bracket fit issues

- Limited reviews

The RTX 2000 Ada Generation targets a unique niche: developers who need workstation features on a budget or in space-constrained builds. The 70W power consumption means this card draws all the power it needs from the PCIe slot, eliminating the need for upgraded power supplies in pre-built workstations. I tested it in a small form factor build and was impressed by the efficiency.

The 16GB of ECC GDDR6 memory brings workstation-grade stability at a fraction of typical workstation GPU pricing. Error correction prevents data corruption during long render jobs, and the Ada Lovelace architecture means you get current-generation features like DLSS and ray tracing acceleration. Performance roughly matches an RTX 4060 Ti while consuming significantly less power.

For game development specifically, this card handles Unity and Godot Engine development competently. Unreal Engine 4 projects run smoothly at 1080p, though Unreal Engine 5 with Lumen enabled pushes beyond comfortable limits. The included low-profile bracket enables installation in compact cases where full-size cards simply cannot fit.

Virtualization support makes this card interesting for developers running multiple VMs or GPU passthrough configurations. The ECC memory and professional drivers handle virtualized workloads more gracefully than gaming cards. Linux support is excellent, with NVIDIA providing full driver coverage for professional applications.

Ideal for Compact and Low-Power Builds

Developers with space constraints, power supply limitations, or SFF workstation needs will find the RTX 2000 Ada uniquely suited to their requirements. The low-profile design, 70W power draw, and ECC memory create a compelling package for specialized development environments where full-size cards cannot fit.

Not Ideal for Demanding Workloads

Any serious Unreal Engine 5 development with Lumen, Nanite, or complex ray tracing will overwhelm this card. The performance targets 1080p development at best. Studios working on current-generation graphics should consider higher-performance alternatives unless the low-power or SFF requirements are mandatory.

8. XFX Radeon RX 7900XT – Budget-Friendly 20GB Option

XFX Radeon RX 7900XT Gaming Graphics Card with 20GB GDDR6, AMD RDNA 3 RX-79TMBABF9

20GB GDDR6

RDNA 3 Architecture

2400 MHz Boost

260W Power

+ The Good

- Excellent value

- 20GB VRAM ample for most projects

- Great thermals

- Prime eligible

- The Bad

- Ray tracing behind NVIDIA

- Limited AI acceleration

- Driver maturity concerns

- Not for CUDA workflows

The XFX RX 7900XT delivers exceptional value for game developers who need more than 16GB VRAM without paying flagship prices. The 20GB buffer handles most Unity and Unreal Engine projects comfortably, and I found the thermal performance excellent during extended development sessions. My card rarely exceeded 68 degrees Celsius under sustained load.

The power efficiency surprised me: at 260W, this card delivers performance competitive with much higher-wattage NVIDIA alternatives. With undervolting, I reduced power consumption to around 175W while maintaining nearly identical performance. For studios concerned about electricity costs or thermal management, this efficiency matters.
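To put the undervolting numbers above in perspective, here is a rough back-of-the-envelope sketch in Python. The 260W and 175W figures come from my testing described above; the usage hours, working days, and electricity price are purely illustrative assumptions, and `undervolt_savings` is a hypothetical helper name, so plug in your own values.

```python
def undervolt_savings(stock_watts, tuned_watts, hours_per_day, days_per_year, price_per_kwh):
    """Return (percent reduction, kWh saved per year, cost saved per year)."""
    saved_watts = stock_watts - tuned_watts
    percent = 100.0 * saved_watts / stock_watts
    kwh_per_year = saved_watts * hours_per_day * days_per_year / 1000.0
    return percent, kwh_per_year, kwh_per_year * price_per_kwh


# 260 W stock and 175 W undervolted are the figures quoted above.
# 8 hours/day, 250 days/year and 0.15 per kWh are assumptions for illustration only.
percent, kwh, cost = undervolt_savings(
    260, 175, hours_per_day=8, days_per_year=250, price_per_kwh=0.15
)
print(f"{percent:.1f}% lower draw, {kwh:.0f} kWh saved per year, about {cost:.2f} per workstation")
```

The calculation assumes the card sits at its power limit for the whole working day, which overstates the savings for typical editor work. Treat the result as an upper bound per workstation rather than a forecast.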

AMD's RDNA 3 architecture provides solid rasterization performance for viewport rendering. Unity development at 1440p remains buttery smooth even with complex particle systems and post-processing. The DisplayPort 2.1 support future-proofs your monitor connectivity for next-generation high-refresh displays that HDMI 2.1 cannot match.

VRAM is the standout feature: 20GB gives you comfortable headroom for texture-heavy projects that would push 16GB cards into swapping territory. The memory bandwidth from the 320-bit interface keeps data flowing smoothly during shader compilation and light baking operations.

Ideal for Cost-Conscious Studios

Independent developers and small studios get maximum value from the RX 7900XT. The 20GB VRAM exceeds typical 16GB alternatives, performance competes with more expensive NVIDIA cards for rasterization, and the price point enables outfitting multiple workstations without breaking budgets. Prime eligibility means fast shipping when you need hardware quickly.

Not Ideal for CUDA-Dependent Workflows

Studios relying on CUDA-specific tools, AI acceleration, or real-time ray tracing should prioritize NVIDIA alternatives. AMD's software ecosystem for professional applications trails NVIDIA's, and the ray tracing performance deficit becomes noticeable in Lumen-heavy Unreal Engine 5 projects.

Buying Guide: Choosing the Right GPU for Game Development

VRAM Requirements for Game Development

Video memory determines how many textures, meshes, and render targets your GPU can hold simultaneously. For current-generation game development, I recommend a minimum of 16GB of VRAM for a comfortable workflow. Unity and Godot projects can often work within 12GB, but Unreal Engine 5 with Nanite and Lumen enabled frequently pushes beyond 16GB when working with high-resolution assets. Studios developing AAA-quality content should target 24GB or higher for future-proofing.
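If you want to see how close your own projects come to these numbers, one option on NVIDIA cards is to read VRAM usage from the `nvidia-smi` command-line tool while the editor is open. The sketch below is a minimal example, assuming `nvidia-smi` is installed and on your PATH; `parse_vram_report` and `read_vram` are hypothetical helper names, and the script does not cover AMD cards.

```python
import subprocess

# nvidia-smi query that prints one CSV row per GPU, with memory values in MiB.
QUERY = [
    "nvidia-smi",
    "--query-gpu=name,memory.used,memory.total",
    "--format=csv,noheader,nounits",
]


def parse_vram_report(csv_text):
    """Turn nvidia-smi CSV rows into dicts with MiB values and percent used."""
    gpus = []
    for line in csv_text.strip().splitlines():
        # Split from the right so a comma inside the GPU name cannot break parsing.
        name, used, total = [field.strip() for field in line.rsplit(",", 2)]
        used_mib, total_mib = int(used), int(total)
        gpus.append({
            "name": name,
            "used_mib": used_mib,
            "total_mib": total_mib,
            "percent_used": round(100.0 * used_mib / total_mib, 1),
        })
    return gpus


def read_vram():
    """Run nvidia-smi and return the parsed report for every GPU it lists."""
    result = subprocess.run(QUERY, capture_output=True, text=True, check=True)
    return parse_vram_report(result.stdout)


if __name__ == "__main__":
    try:
        for gpu in read_vram():
            print(f"{gpu['name']}: {gpu['used_mib']} / {gpu['total_mib']} MiB ({gpu['percent_used']}%)")
    except (FileNotFoundError, subprocess.CalledProcessError):
        print("nvidia-smi is not available on this machine")
```

Run it with your heaviest level loaded in the editor. If usage regularly sits near the total, that is a reasonable signal to move up a VRAM tier.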

Consider your typical scene complexity: an open-world game with streaming textures needs more VRAM than a 2D indie title. Character artists working with 4K texture sets and multiple material layers will fill 16GB quickly. Level designers building large environments should prioritize VRAM headroom over raw compute performance.
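To make the texture math concrete, here is a small Python sketch that estimates how much memory a texture set occupies. It relies on two standard facts: uncompressed RGBA8 uses 4 bytes per texel and BC7 block compression uses 1 byte per texel, and a full mip chain adds roughly one third on top of the base level. The scene size (200 materials with 4 maps each) is a made-up example, and `texture_mib` is a hypothetical helper name.

```python
RGBA8_BYTES_PER_TEXEL = 4.0  # uncompressed, 8 bits per channel
BC7_BYTES_PER_TEXEL = 1.0    # block compressed: 16 bytes per 4x4 block


def texture_mib(width, height, bytes_per_texel, mipmaps=True):
    """Approximate memory footprint of one texture in MiB."""
    size_bytes = width * height * bytes_per_texel
    if mipmaps:
        # A full mip chain adds about one third to the base level.
        size_bytes = size_bytes * 4 / 3
    return size_bytes / (1024 * 1024)


raw_4k = texture_mib(4096, 4096, RGBA8_BYTES_PER_TEXEL)
bc7_4k = texture_mib(4096, 4096, BC7_BYTES_PER_TEXEL)

# Hypothetical scene: 200 unique materials, each with four 4K BC7 maps.
scene_gib = 200 * 4 * bc7_4k / 1024

print(f"4K RGBA8 with mips: {raw_4k:.1f} MiB")
print(f"4K BC7 with mips:   {bc7_4k:.1f} MiB")
print(f"200 materials x 4 maps: {scene_gib:.1f} GiB")
```

Engines stream textures and rarely keep every mip resident, so real usage is usually lower than this worst case. The point is only that a few hundred 4K materials can approach a 16GB budget on their own.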

Workstation vs Gaming GPU for Game Development

Here is the truth most hardware guides will not tell you: gaming GPUs typically offer better value for game development than true workstation cards. Community consensus among experienced developers on forums like r/gamedev suggests that professional game studios largely use gaming GPUs rather than workstation-specific hardware. The professional certification that workstation cards carry primarily benefits CAD, medical imaging, and scientific computing applications, not game development.

Gaming GPUs provide better price-to-performance ratios and more frequently updated drivers optimized for the games and engines you are developing. Workstation cards offer ECC memory for error correction and professional driver certification, but these benefits rarely translate to meaningful improvements in game development workflows. If you are building budget gaming PCs for game development, prioritize gaming GPUs over workstation alternatives.

CUDA Cores, Tensor Cores, and Ray Tracing

NVIDIA's architecture divides processing between CUDA cores for general computation, tensor cores for AI acceleration, and RT cores for ray tracing. For game development, all three matter. CUDA cores run shader workloads and general rendering tasks. Tensor cores enable DLSS implementation testing and the AI-assisted tools that are increasingly common in modern development pipelines. RT cores power real-time ray tracing preview in engines like Unreal Engine 5.

AMD equivalents work well for most tasks but trail NVIDIA specifically in ray tracing and AI acceleration. If your project uses extensive ray tracing or incorporates AI tools for procedural generation, NVIDIA cards provide better software ecosystem support. Developers working with Gigabyte AORUS graphics cards and similar gaming-focused hardware get excellent development performance.

Power and Cooling Considerations

High-end GPUs demand robust power supplies and cooling solutions. NVIDIA recommends an 850W minimum for the RTX 4090; realistically, plan on 1000W for stable operation. Budget for adequate power headroom: undersized power supplies cause crashes during shader compilation spikes that draw momentary peak power. Also consider case airflow: cards like the RTX 4090 and RX 7900XTX generate substantial heat that needs effective evacuation from your chassis.

For multi-GPU configurations or studio environments, factor in total system power draw, including CPU, storage, and peripherals. A development workstation with an RTX 4090, a high-end CPU, multiple NVMe drives, and 64GB of RAM can easily exceed 800W of sustained draw. Professional installations should verify electrical circuit capacity before deployment.
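The sizing logic above can be sketched in a few lines of Python: add up the expected draw of each component, apply a headroom margin, and round up to a common PSU size. The 450W GPU figure is the RTX 4090 TDP quoted earlier in this guide; the CPU and "everything else" figures, the 25% headroom, and the helper name `recommended_psu_watts` are assumptions for illustration, not measurements.

```python
import math


def recommended_psu_watts(component_watts, headroom=0.25, step=50):
    """Return (total draw, PSU size rounded up to the next `step` watts)."""
    total = sum(component_watts.values())
    target = total * (1 + headroom)
    return total, int(math.ceil(target / step) * step)


# Hypothetical single-GPU workstation; only the GPU figure comes from this guide.
build = {
    "gpu_rtx_4090": 450,
    "cpu_high_end": 250,              # assumed
    "drives_ram_fans_board": 75,      # assumed
}

total_draw, psu_size = recommended_psu_watts(build)
print(f"Estimated draw: {total_draw} W, suggested PSU: {psu_size} W")
```

With these assumed numbers the estimate lands on a 1000W unit, which lines up with the recommendation above. For multi-GPU rigs, add an entry per card and rerun the calculation.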

Frequently Asked Questions

What GPU is best for game development?

The NVIDIA RTX 4090 with 24GB VRAM offers the best overall performance for game development, handling shader compilation, viewport rendering, and real-time ray tracing with ease. For better value, the RTX 4080 Super or RX 7900XTX deliver excellent performance at lower price points. Most game developers do not need workstation-specific GPUs; high-end gaming cards provide better price-to-performance for game development workflows.

Are workstation GPUs good for gaming?

Workstation GPUs like the NVIDIA RTX A6000 or AMD Radeon Pro W7900 can run games, but they are not optimized for gaming performance. They run at lower clock speeds optimized for stability rather than speed, and their professional drivers prioritize application certification over game compatibility. Gaming GPUs deliver significantly better gaming performance per dollar spent, making them more practical choices for most users.

What is the 80/20 rule in game development?

The 80/20 rule in game development suggests that 80% of players will experience 20% of your game content, meaning developers should focus polish efforts on core gameplay loops and main paths rather than spreading effort equally across all content. This principle also applies to hardware optimization: prioritize performance on the configurations and scenarios where most players will spend their time rather than optimizing for edge cases.

Is 64GB RAM overkill for game dev?

For most game development workflows, 32GB RAM provides sufficient headroom. However, 64GB becomes valuable when working with large Unreal Engine 5 projects, running multiple engine instances, performing light baking, or multitasking with memory-intensive tools like 3D modeling software alongside your game engine. Studios developing AAA content or using virtual production workflows benefit significantly from 64GB configurations.

Conclusion

Selecting the best workstation GPU for game development in 2026 ultimately depends on your specific workflow, budget, and future plans. For most developers, high-end gaming GPUs like the ASUS ROG Strix RTX 4090 or XFX RX 7900XTX deliver the best balance of performance, VRAM, and value. Workstation-specific cards like the RTX A6000 and Radeon Pro W7900 serve specialized niches but offer diminishing returns for typical game development tasks.

VRAM remains the critical specification: 16GB handles current projects adequately, 20-24GB provides comfortable headroom for next-generation development, and 48GB workstation cards serve only the most demanding workflows. Consider your actual needs before over-investing in hardware that exceeds your project requirements. The cards in this guide cover the full spectrum from budget-friendly options to professional workstation powerhouses, ensuring every game developer can find the right GPU for their creative work.